Managing Vendor Risk: Is Your AI Supply Chain Secure?

Your CRM uses AI to score leads. That AI runs on a model from a third-party provider. The model was trained on data from another company. If any link in that chain is compromised, your customer data could be exposed.

Welcome to the AI supply chain—a complex web of vendors, sub-processors, and dependencies that most businesses have never mapped, let alone secured.

At CloudGeeks, cybersecurity is one of our core pillars. We’ve seen businesses shocked to discover their “secure” SaaS tool was sending data to unknown AI providers. Here’s how to assess and manage AI vendor risk before it becomes a crisis.

The AI Supply Chain Explained

Traditional Software vs. AI-Enabled Software

Traditional SaaS is relatively simple:

- You → SaaS Vendor → Their Infrastructure

AI-enabled SaaS is more complex:

- You → SaaS Vendor → Their Infrastructure → AI API Provider → AI Model Provider → Training Data Sources

Each node is a potential risk point.

Real Example: The AI CRM Chain

Consider a popular CRM with AI features:

Layer 1: Your Company

- Inputs customer data, uses AI features

- Assumes the CRM vendor is responsible for security

Layer 2: CRM Vendor

- Stores your data

- Calls AI APIs for intelligent features

- May use multiple AI providers for different features

Layer 3: AI API Provider (e.g., OpenAI, Anthropic, Google)

- Processes your data when AI features activate

- Has their own data handling policies

- May not be the provider you assume

Layer 4: Infrastructure Provider

- Cloud hosting (AWS, Azure, Google Cloud)

- May be in different regions than the CRM vendor claims

- Has some level of access to your data (at minimum, control of the underlying hosting environment)

Layer 5: Model Training Pipeline

- Original training data for AI models

- May include data from previous customers

- Potential source of data leakage

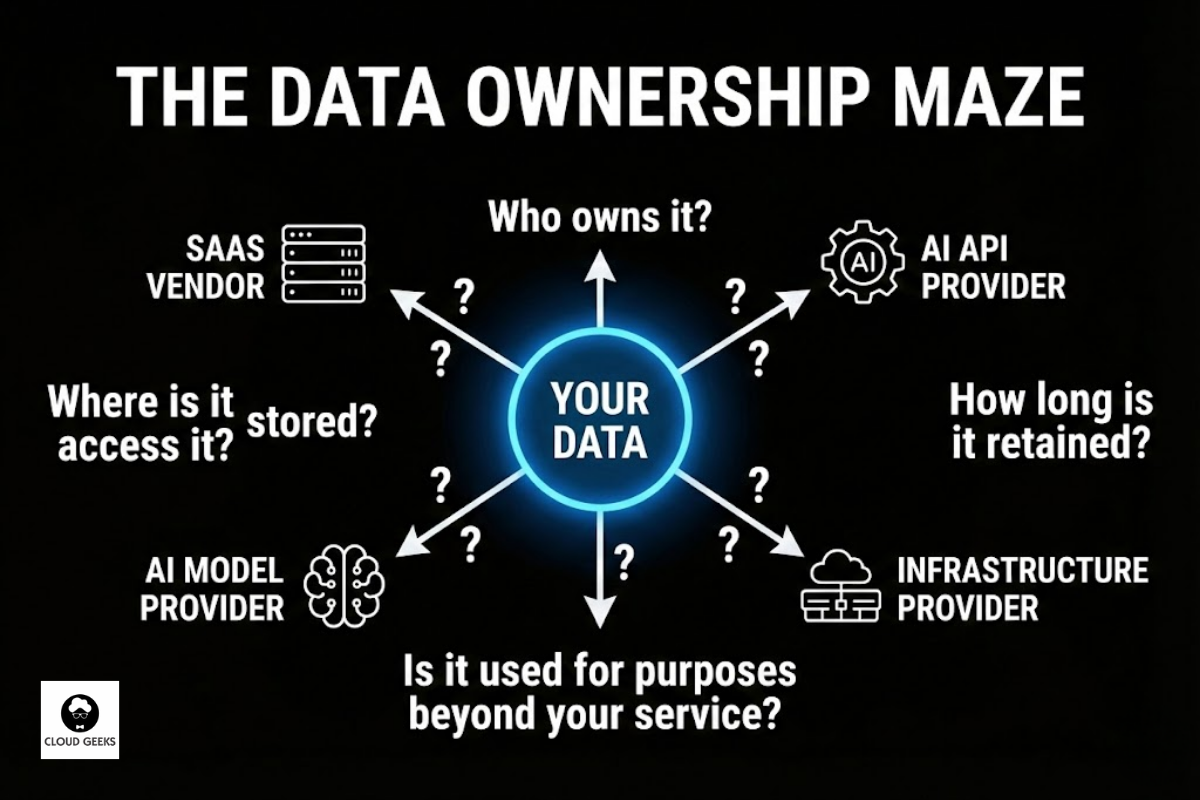

The Data Ownership Maze

When your data flows through this chain:

- Who owns it at each stage?

- Who can access it?

- Where is it stored?

- How long is it retained?

- Is it used for purposes beyond your service?

Most businesses can’t answer these questions. That’s the problem.

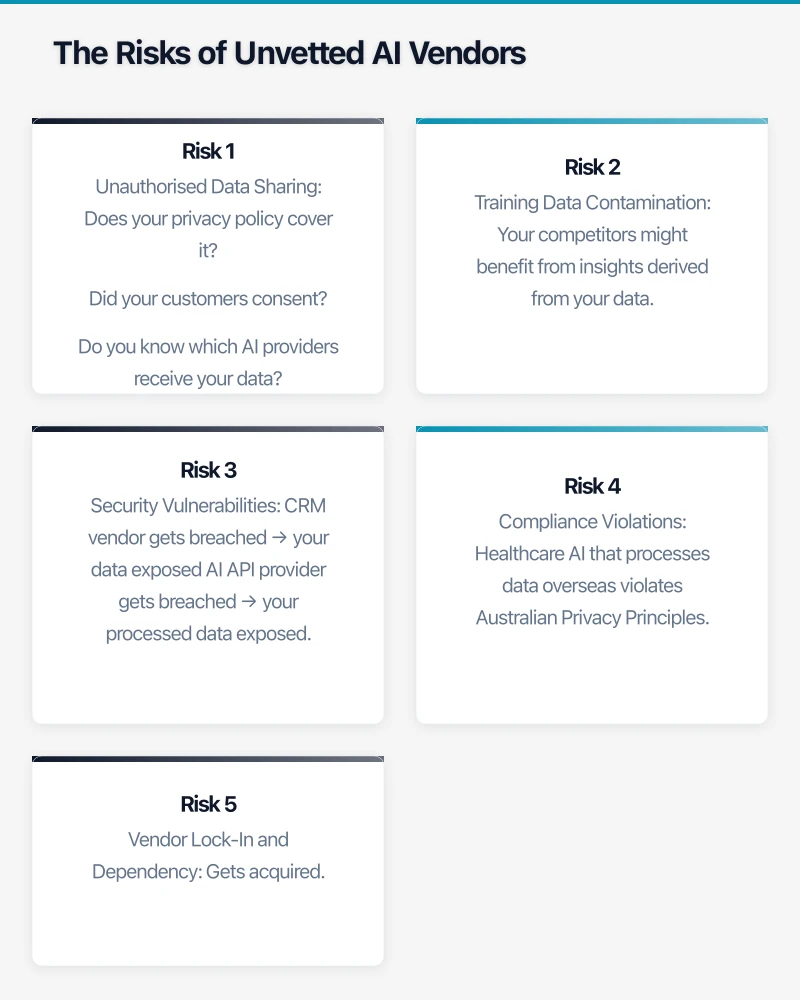
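One way to make the questions above trackable is to keep a small record for each stage of the chain and flag any question without a documented answer. The sketch below is a minimal illustration, assuming answers are stored as free text. The `Stage` class, the `gaps` function and the example answers are hypothetical, not part of any particular tool.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# The five questions from the list above, asked of every stage in the chain.
QUESTIONS = ("owner", "access", "storage_location", "retention", "secondary_use")

@dataclass
class Stage:
    """One link in the AI supply chain and what you know about it (hypothetical structure)."""
    name: str
    answers: Dict[str, Optional[str]] = field(default_factory=dict)

    def unanswered(self) -> List[str]:
        # Missing, blank or "unknown" answers all count as gaps.
        return [
            q for q in QUESTIONS
            if (self.answers.get(q) or "").strip().lower() in ("", "unknown")
        ]

def gaps(chain: List[Stage]) -> Dict[str, List[str]]:
    """Map each stage to its open questions, skipping fully documented stages."""
    return {s.name: s.unanswered() for s in chain if s.unanswered()}

# Illustrative answers only.
chain = [
    Stage("CRM vendor", {
        "owner": "Us (per DPA)", "access": "Vendor support staff",
        "storage_location": "Sydney", "retention": "Contract term + 30 days",
        "secondary_use": "None permitted",
    }),
    Stage("AI API provider", {"storage_location": "Unknown"}),
]
print(gaps(chain))
```

Here the CRM vendor is fully documented, so only the AI API provider appears, with all five questions still open. Treating "unknown" as a gap is a deliberate choice: an answer of "we don't know" is the risk.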

The Risks of Unvetted AI Vendors

Risk 1: Unauthorised Data Sharing

Many AI features work by sending your data to third-party AI providers. Your CRM vendor’s contract might allow this, but:

- Does your privacy policy cover it?

- Did your customers consent?

- Do you know which AI providers receive your data?

Risk 2: Training Data Contamination

Some AI providers use customer inputs to improve their models. This means:

- Your competitors might benefit from insights derived from your data

- Sensitive information could resurface in other customers’ outputs

- Data deletion requests may be impossible to fully satisfy

Risk 3: Security Vulnerabilities

Each vendor in the chain is a potential breach point:

- CRM vendor gets breached → your data exposed

- AI API provider gets breached → your processed data exposed

- Infrastructure provider misconfigured → data accessible

The attack surface multiplies with each additional vendor.
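To see how exposure compounds, suppose (purely for illustration) that each vendor independently has a probability p of a breach affecting your data in a given year. The chance that at least one link is breached is then 1 - (1 - p)^n. The 5% figure below is an assumption, not a measured rate, and real vendor risks are rarely fully independent.

```python
def chance_any_breach(p: float, n: int) -> float:
    """Probability that at least one of n vendors is breached in a year,
    assuming each is breached independently with probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    return 1 - (1 - p) ** n

# Illustrative only: an assumed 5% annual breach probability per vendor.
for n in (1, 3, 5):
    print(f"{n} vendor(s): {chance_any_breach(0.05, n):.1%}")
```

Under these assumptions, one vendor gives a 5.0% chance, three give 14.3%, and five give about 22.6%.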

Risk 4: Compliance Violations

If any link in the chain doesn’t meet your compliance requirements:

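- Healthcare AI that sends patient data overseas without appropriate safeguards may breach the Australian Privacy Principles (notably APP 8, cross-border disclosure)

- Financial AI that lacks adequate security may breach APRA’s information security requirements (CPS 234)

- A government contractor using non-sovereign AI may breach its contract terms

You can remain accountable even when the violation occurs in your supply chain rather than in your own systems.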

Risk 5: Vendor Lock-In and Dependency

If your AI vendor:

- Gets acquired by a competitor

- Changes pricing dramatically

- Discontinues service

- Gets sanctioned or restricted

Your business operations could be significantly disrupted.

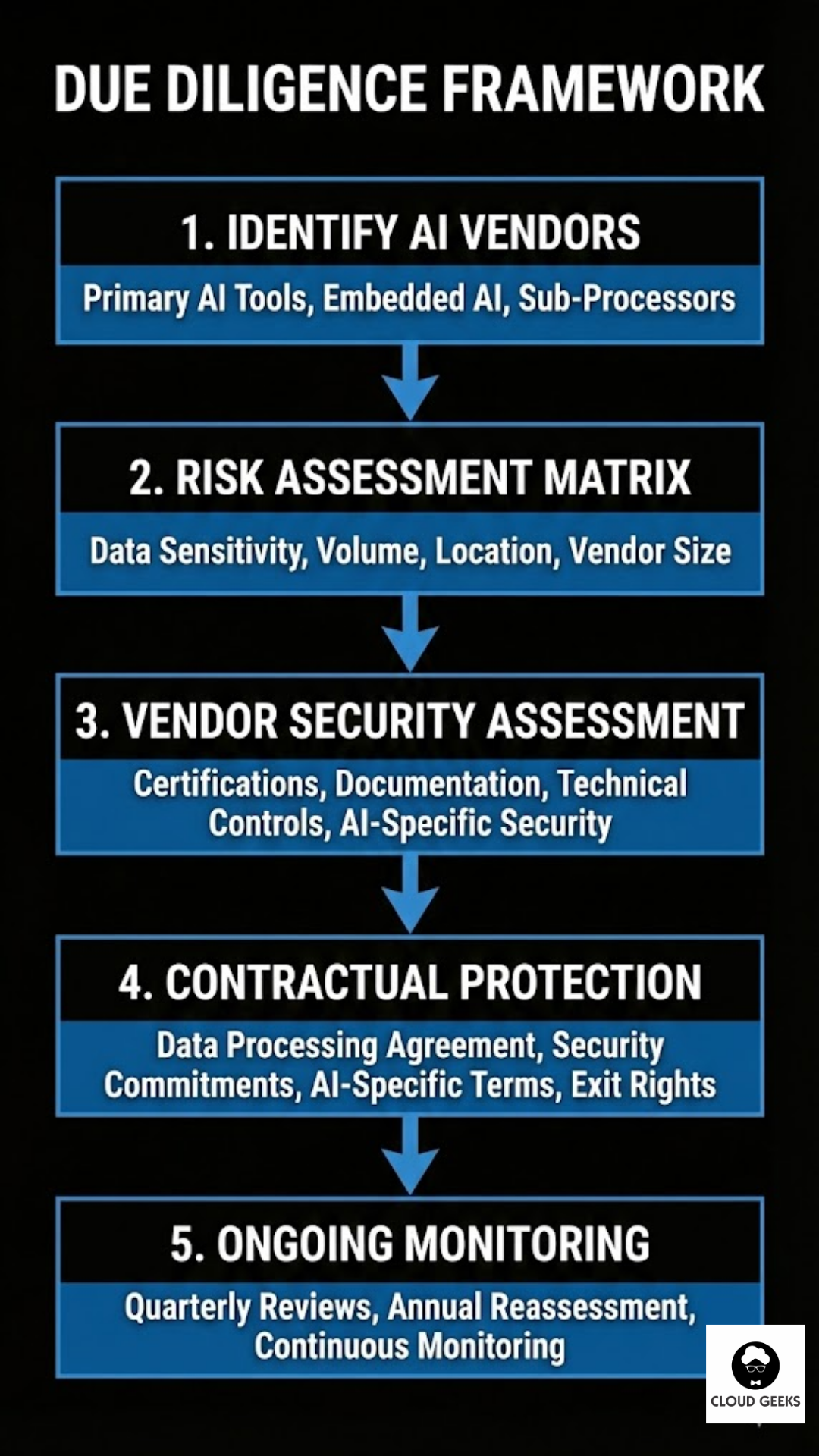

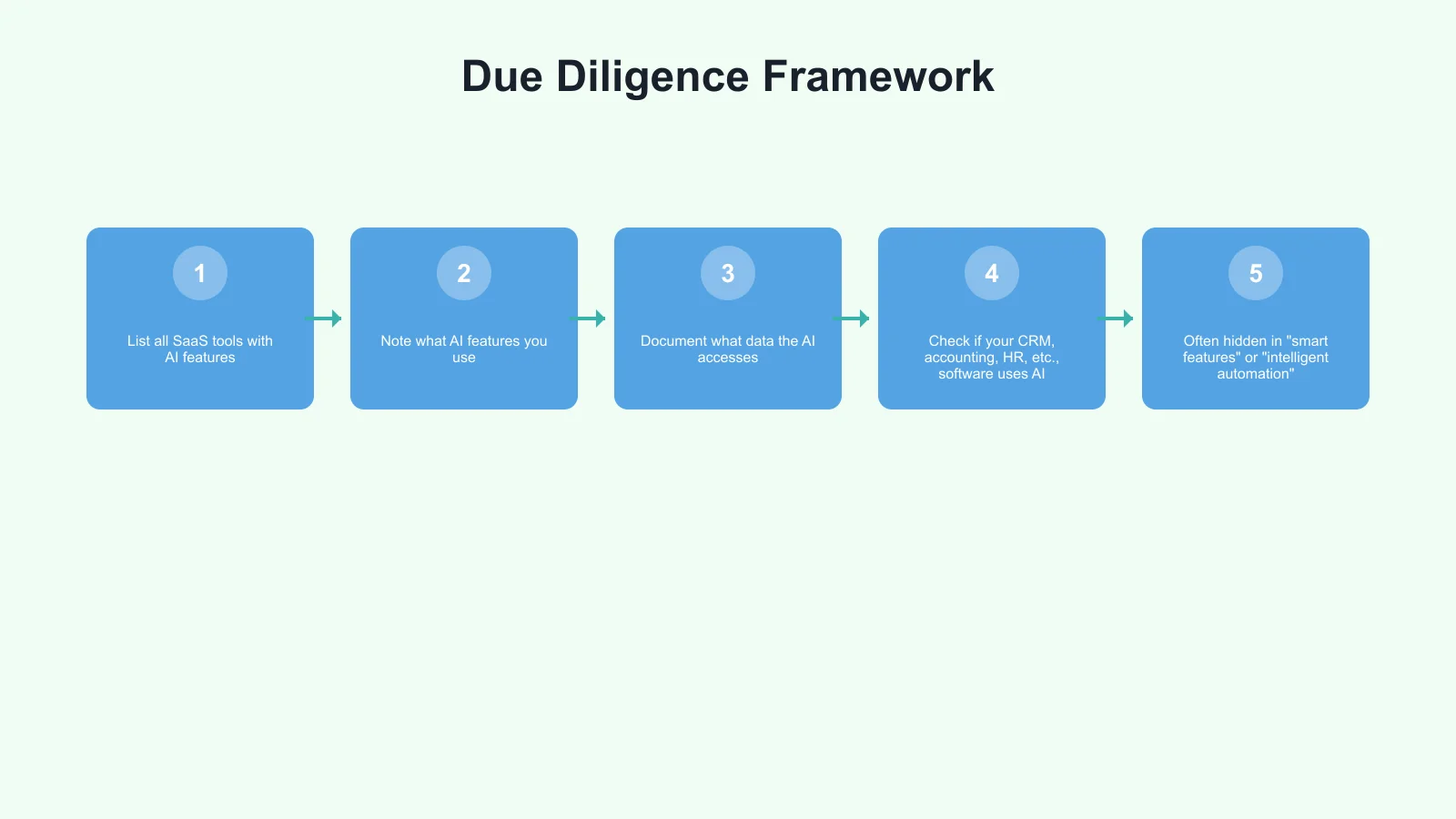

Due Diligence Framework

Step 1: Identify Your AI Vendors

Create a complete inventory:

Primary AI Tools (you contracted directly):

- List all SaaS tools with AI features

- Note what AI features you use

- Document what data the AI accesses

Embedded AI (within tools you use):

- Check if your CRM, accounting, HR, etc., software uses AI

- Often hidden in “smart features” or “intelligent automation”

- May have been added in updates without notice

Sub-Processors (vendors’ vendors):

- Request sub-processor lists from primary vendors

- Identify which sub-processors handle AI functions

- Note geographic locations
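The three inventory categories above can live in a single list, with each entry recording which tool or vendor it sits behind. The sketch below is a minimal illustration; the `AIVendor` fields, the `needs_location_review` helper and all vendor names are hypothetical placeholders. It flags anything processed outside Australia, or with no disclosed location, which corresponds to the medium- and high-risk "Processing Location" ratings in the matrix that follows.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

@dataclass(frozen=True)
class AIVendor:
    name: str
    kind: str                   # "primary", "embedded" or "sub-processor"
    parent: Optional[str]       # tool or vendor it sits behind (None if contracted directly)
    ai_features: Tuple[str, ...]
    data_accessed: Tuple[str, ...]
    locations: Tuple[str, ...]  # processing locations; empty means unknown

def needs_location_review(inventory: List[AIVendor]) -> List[AIVendor]:
    """Vendors that process outside Australia or haven't disclosed where."""
    return [
        v for v in inventory
        if not v.locations or any(loc != "Australia" for loc in v.locations)
    ]

inventory = [
    AIVendor("Example CRM", "primary", None, ("lead scoring",),
             ("customer contacts",), ("Australia",)),
    AIVendor("Example LLM API", "sub-processor", "Example CRM",
             ("email drafting",), ("email bodies",), ("United States",)),
    AIVendor("Screening model", "embedded", "Example HR suite",
             ("CV screening",), ("applicant CVs",), ()),
]
print([v.name for v in needs_location_review(inventory)])
```

A spreadsheet with the same columns works just as well; the point is that sub-processors and embedded AI get their own rows rather than being hidden behind the primary tool.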

Step 2: Risk Assessment Matrix

For each AI vendor/feature, assess:

| Factor | Low Risk | Medium Risk | High Risk |
|---|---|---|---|
| Data Sensitivity | Public info only | Internal business data | Personal/financial data |
| Data Volume | Occasional use | Regular use | Continuous processing |
| Processing Location | Australia only | Australia + trusted countries | Unknown/global |
| Vendor Size | Large, established | Mid-sized | Startup/unknown |
| Replaceability | Many alternatives | Some alternatives | No alternatives |
| Contractual Protection | Strong terms | Standard terms | Minimal terms |

Scoring: Assign 1 (Low), 2 (Medium), or 3 (High) to each factor and add them up. With six factors, totals range from 6 to 18; the total indicates the overall risk level.

Risk Tiers:

- 6-9: Low Risk (standard monitoring)

- 10-14: Medium Risk (enhanced review)

- 15-18: High Risk (intensive management or reconsider use)
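The scoring and tiers above are simple enough to encode directly, which helps keep assessments consistent across reviewers. This is a minimal sketch; the factor keys and the example ratings for a CRM lead-scoring feature are illustrative assumptions, not a real assessment.

```python
from typing import Dict

FACTORS = (
    "data_sensitivity", "data_volume", "processing_location",
    "vendor_size", "replaceability", "contractual_protection",
)
POINTS = {"low": 1, "medium": 2, "high": 3}

def risk_score(ratings: Dict[str, str]) -> int:
    """Sum 1-3 points for each of the six factors (total 6-18)."""
    missing = set(FACTORS) - set(ratings)
    if missing:
        raise ValueError(f"unrated factors: {sorted(missing)}")
    return sum(POINTS[ratings[f]] for f in FACTORS)

def risk_tier(score: int) -> str:
    """Map a total score to the tiers defined above."""
    if not 6 <= score <= 18:
        raise ValueError("score must be between 6 and 18")
    if score <= 9:
        return "Low Risk (standard monitoring)"
    if score <= 14:
        return "Medium Risk (enhanced review)"
    return "High Risk (intensive management or reconsider use)"

# Illustrative ratings for an AI lead-scoring feature in a CRM.
crm_lead_scoring = {
    "data_sensitivity": "high",          # personal data
    "data_volume": "high",               # continuous processing
    "processing_location": "medium",     # Australia + trusted countries
    "vendor_size": "low",                # large, established vendor
    "replaceability": "medium",          # some alternatives
    "contractual_protection": "medium",  # standard terms
}
score = risk_score(crm_lead_scoring)
print(score, risk_tier(score))
```

This example totals 3 + 3 + 2 + 1 + 2 + 2 = 13, which lands in the Medium tier. Refusing to score an incomplete assessment is a design choice: an unrated factor otherwise silently lowers the total.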

Step 3: Vendor Security Assessment

For medium and high-risk vendors, request:

Security Certifications:

- ISO 27001 (information security management)

- SOC 2 Type II (service organisation controls)

- IRAP assessment (if handling government data)

- Industry-specific certifications or attestations (e.g., PCI DSS for payment data, or health-data compliance comparable to HIPAA)

Security Documentation:

- Security whitepaper or overview

- Penetration testing summary (not full report)

- Incident history and response process

- Employee security practices

Technical Controls:

- Encryption (at rest and in transit)

- Access control mechanisms

- Audit logging capabilities

- Data segregation approach

AI-Specific Security:

- Model security (protection against prompt injection, etc.)

- Training data governance

- Output filtering and safety measures

- Data retention in AI processing
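Once documents start arriving, a quick gap check shows what is still outstanding and whether any certification has lapsed. The sketch below assumes you label each evidence item with a short key (the keys are hypothetical) and record a "valid until" date for each certification. ISO 27001 certificates carry an expiry date; a SOC 2 Type II report covers a period instead, so the date you record for it is your own judgement of when the report becomes stale.

```python
from datetime import date
from typing import Dict, List, Set

# Evidence items from the lists above (keys are hypothetical labels).
REQUIRED_EVIDENCE = {
    "security_overview", "pentest_summary", "incident_process", "employee_practices",
    "encryption", "access_controls", "audit_logging", "data_segregation",
    "model_security", "training_data_governance", "output_filtering", "ai_data_retention",
}

def assessment_gaps(received: Set[str], cert_valid_until: Dict[str, date],
                    today: date) -> Dict[str, List[str]]:
    """List evidence not yet received and certifications whose validity has ended."""
    return {
        "missing_evidence": sorted(REQUIRED_EVIDENCE - received),
        "lapsed_certifications": sorted(
            name for name, until in cert_valid_until.items() if until < today
        ),
    }

result = assessment_gaps(
    received={"security_overview", "pentest_summary", "encryption", "access_controls"},
    cert_valid_until={"ISO 27001": date(2026, 3, 31), "SOC 2 Type II": date(2024, 6, 30)},
    today=date(2025, 1, 15),
)
print(result["lapsed_certifications"])
print(len(result["missing_evidence"]), "evidence items outstanding")
```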

Step 4: Contractual Protection

Ensure contracts include:

Data Processing Agreement:

- Clear definition of permitted uses

- Data ownership confirmation (you own your data)

- Sub-processor notification requirements

- Data deletion upon termination

Security Commitments:

- Minimum security standards

- Breach notification timeline (ideally 24-48 hours)

- Right to audit or receive audit reports

- Liability for security failures

AI-Specific Terms:

- No use of your data for model training

- Transparency about AI sub-processors

- Geographic restrictions on processing

- Right to opt out of AI features

Exit Rights:

- Data portability in usable formats

- Reasonable transition assistance

- No holding your data hostage (e.g., blocking or charging excessively for export)
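A contract review can be run as a checklist against the terms above, with the breach notification window checked as a number rather than a yes/no. This is a minimal sketch: the clause keys are hypothetical labels for the terms listed, and the 48-hour default simply uses the upper end of the suggested 24-48 hour range.

```python
from typing import List, Optional, Set

REQUIRED_CLAUSES = {
    # Data Processing Agreement
    "permitted_uses", "customer_owns_data", "subprocessor_notification",
    "deletion_on_termination",
    # Security commitments
    "minimum_security_standards", "audit_rights", "security_liability",
    # AI-specific terms
    "no_training_on_our_data", "ai_subprocessor_transparency",
    "processing_geography", "ai_opt_out",
    # Exit rights
    "data_portability", "transition_assistance",
}

def review_contract(clauses: Set[str], breach_notice_hours: Optional[float],
                    target_hours: float = 48) -> List[str]:
    """Return a list of issues to raise with the vendor (empty if none)."""
    issues = [f"missing clause: {c}" for c in sorted(REQUIRED_CLAUSES - clauses)]
    if breach_notice_hours is None:
        issues.append("no breach notification timeline")
    elif breach_notice_hours > target_hours:
        issues.append(
            f"breach notice of {breach_notice_hours:g}h exceeds {target_hours:g}h target"
        )
    return issues

# A contract covering everything except model training, with 72-hour notice.
print(review_contract(REQUIRED_CLAUSES - {"no_training_on_our_data"}, 72))
```

The output is a list of negotiation points rather than a pass/fail verdict, since most gaps are resolved by amendment rather than by walking away.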

Step 5: Ongoing Monitoring

Vendor risk management isn’t a one-time exercise. Establish:

Quarterly Reviews:

- Any security incidents reported?

- Any significant vendor changes?

- Any new sub-processors added?

- Any compliance certifications lapsed?

Annual Reassessment:

- Full risk assessment update

- Contract renewal review

- Alternative vendor evaluation

- Alignment with current business needs

Continuous Monitoring:

- News alerts for vendor security incidents

- Notification tracking for policy changes

- Sub-processor list monitoring

- User access reviews
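Sub-processor list monitoring mostly comes down to comparing this quarter's list with the last one. The sketch below assumes you save each snapshot as a mapping of sub-processor name to processing location (copied from the vendor's published list or an export; no particular vendor format is assumed). It reports additions, removals and relocations, all of which are worth a closer look. The names are placeholders.

```python
from typing import Dict, List, Tuple

def diff_subprocessors(previous: Dict[str, str], current: Dict[str, str]) -> Dict[str, list]:
    """Compare two snapshots mapping sub-processor name -> processing location."""
    added = sorted(set(current) - set(previous))
    removed = sorted(set(previous) - set(current))
    relocated: List[Tuple[str, str, str]] = sorted(
        (name, previous[name], current[name])
        for name in set(previous) & set(current)
        if previous[name] != current[name]
    )
    return {"added": added, "removed": removed, "relocated": relocated}

last_quarter = {"Example Cloud": "Australia", "Example Email": "Australia"}
this_quarter = {"Example Cloud": "Singapore", "Example LLM API": "United States"}
print(diff_subprocessors(last_quarter, this_quarter))
```

Relocations are tracked separately because a familiar sub-processor moving offshore can change your compliance position as much as a new one.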

Practical Due Diligence Questions

Questions for Sales/Account Teams

- “Does your product use AI or machine learning features?”

- “Which AI providers power those features?”

- “Where is our data processed for AI functions?”

- “Is our data used to train your AI models?”

- “Can we opt out of AI processing entirely?”

Questions for Security Teams

- “Which certifications cover your AI processing environment?”

- “How is data encrypted during AI processing?”

- “What’s your incident response process for AI-specific incidents?”

- “How do you secure against prompt injection and model attacks?”

- “Can you provide penetration testing results for AI components?”

Questions for Legal/Compliance

- “Who owns outputs generated using our data?”

- “What’s your liability for AI-generated errors?”

- “How do you comply with Australian Privacy Principles?”

- “What indemnification do you provide for AI-related issues?”

- “How do you handle deletion requests in AI systems?”

Red Flags

Watch for:

- Vague answers about AI providers (“we use industry-standard AI”)

- Resistance to discussing sub-processors

- No written documentation of AI data handling

- Unable to specify processing locations

- No certifications for AI-specific components

- Contracts that allow unlimited data use

Industry-Specific Considerations

Healthcare

Additional requirements:

- My Health Record compatibility

- Australian Privacy Principles (APP) compliance

- State and territory health records legislation

- Professional body guidelines on AI use

Financial Services

Additional requirements:

- APRA Prudential Standard CPS 234 (Information Security)

- ASIC regulatory requirements

- Privacy Act implications for financial data

- Potential for AI decisions to constitute financial advice

Legal

Additional requirements:

- Legal professional privilege considerations

- Confidentiality obligations to clients

- Jurisdictional issues with overseas processing

- Professional conduct rules on technology use

Government Contractors

Additional requirements:

- PSPF (Protective Security Policy Framework)

- ISM (Information Security Manual) alignment

- IRAP assessment requirements

- Data sovereignty mandates

Managing Existing Vendor Relationships

What If You’ve Already Deployed?

Immediate Actions:

- Audit current AI data flows

- Review contracts for AI-related terms

- Request vendor security documentation

- Assess against risk matrix

Remediation Options:

Low-Risk Vendors:

- Continue use with standard monitoring

- Ensure contracts are updated at renewal

- Track any reported incidents

Medium-Risk Vendors:

- Request additional security commitments

- Negotiate contract amendments

- Implement additional controls (e.g., data minimisation)

- Establish escalation path for concerns

High-Risk Vendors:

- Develop migration plan to alternatives

- Restrict use to lowest-sensitivity data

- Implement compensating controls

- Accelerate contract exit if needed

Negotiating with Existing Vendors

Leverage Points:

- Upcoming renewal discussions

- Vendor’s desire to maintain relationship

- New regulatory requirements

- Industry incidents raising awareness

Reasonable Asks:

- Data Processing Agreement with AI terms

- Annual security attestation

- Sub-processor notification

- Data deletion certification

May Be Difficult:

- Changing fundamental AI architecture

- Custom security certifications

- Exclusive data processing terms

- Significant price reductions for added compliance

Building Organisational Capability

Roles and Responsibilities

Procurement/Finance:

- Ensure vendor assessments before purchasing

- Include security/AI terms in contracts

- Track vendor renewal dates

IT/Security:

- Conduct technical assessments

- Monitor for security incidents

- Maintain vendor risk register

Legal/Compliance:

- Review contract terms

- Assess regulatory alignment

- Advise on risk acceptance

Business Users:

- Report new AI tools (no shadow AI)

- Flag vendor concerns

- Provide input on vendor effectiveness

Documentation Requirements

Maintain:

- Vendor inventory (including AI features)

- Risk assessments for each vendor

- Contract summaries with key terms

- Incident logs and responses

- Audit reports and certifications

- Communication records

Incident Response Integration

When a vendor incident occurs:

- Assess impact on your data

- Document timeline and facts

- Notify affected parties as required

- Implement containment measures

- Review vendor relationship

- Update risk assessment
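Documenting the timeline as timestamped events makes it easy to check later whether the vendor met its contracted notification window. This is a minimal sketch; the `IncidentLog` class, the event labels and the dates are hypothetical. Note that the time the vendor detected the breach usually comes from the vendor's own disclosure.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List, Optional, Tuple

@dataclass
class IncidentLog:
    """Chronological record of a vendor incident (hypothetical structure)."""
    vendor: str
    events: List[Tuple[datetime, str]] = field(default_factory=list)

    def record(self, when: datetime, what: str) -> None:
        self.events.append((when, what))
        self.events.sort()  # keep the timeline in chronological order

    def first(self, what: str) -> Optional[datetime]:
        """Time of the earliest event with this label, if any."""
        return next((t for t, w in self.events if w == what), None)

    def vendor_notice_within(self, window: timedelta) -> Optional[bool]:
        """Did the vendor tell you within the window? None if either time is unknown."""
        detected = self.first("vendor detected breach")
        notified = self.first("vendor notified us")
        if detected is None or notified is None:
            return None
        return notified - detected <= window

log = IncidentLog("Example LLM API")
log.record(datetime(2025, 3, 6, 10, 0), "vendor notified us")
log.record(datetime(2025, 3, 3, 9, 0), "vendor detected breach")
print(log.vendor_notice_within(timedelta(hours=48)))
```

In this example the vendor took 73 hours, so a 48-hour contractual commitment was missed, which is a concrete point for the vendor relationship review.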

The Cost of Ignoring Vendor Risk

Direct Costs:

- Breach notification and response

- Regulatory fines and penalties

- Legal fees and settlements

- Remediation and recovery

Indirect Costs:

- Customer trust damage

- Business disruption

- Competitive disadvantage

- Management distraction

- Employee morale impact

Example: A mid-sized Australian business experienced a breach through an AI vendor. Total cost: $340,000 in direct expenses, estimated $1.2M in lost business over two years.

By comparison, proper vendor due diligence might cost $10,000-20,000 annually.
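Putting the figures from this example side by side shows the scale of the difference. This is simple arithmetic only: it ignores discounting and assumes due diligence would have prevented the incident, which no process can guarantee.

```python
# Figures from the example above; the due diligence range is the estimate given.
direct_costs = 340_000
lost_business = 1_200_000          # estimated, over two years
annual_due_diligence = (10_000, 20_000)

incident_total = direct_costs + lost_business
for annual in annual_due_diligence:
    years = incident_total / annual
    print(f"${incident_total:,} = {years:.0f} years of due diligence at ${annual:,}/year")
```

The $1,540,000 total is equivalent to 154 years of due diligence at $10,000 per year, or 77 years at $20,000.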

Action Plan

This Week

- List all SaaS tools with AI features your business uses

- Identify which handle sensitive data

- Check one high-risk vendor’s security documentation

This Month

- Complete full AI vendor inventory

- Risk-assess your five most critical vendors

- Request security documentation from high-risk vendors

- Review contracts for AI-specific terms

This Quarter

- Implement vendor risk register

- Establish quarterly review cadence

- Update procurement process to include AI due diligence

- Train staff on shadow AI risks

Ongoing

- Monitor vendor security posture

- Track sub-processor changes

- Reassess at contract renewals

- Update based on regulatory changes

Conclusion

Your AI supply chain is only as secure as its weakest link. In an era where every SaaS tool seems to be adding AI features, that chain is getting longer and more complex.

But the solution isn’t to avoid AI—it’s to manage it intelligently. With proper due diligence, contractual protection, and ongoing monitoring, you can capture AI benefits while managing supply chain risks.

Start mapping your AI supply chain today. You might be surprised what you find.

Ready to assess your AI vendor risk? Contact CloudGeeks for a comprehensive AI supply chain analysis. We’ll help you identify risks, strengthen contracts, and build sustainable vendor management practices. Because in cybersecurity, what you don’t know can hurt you.

Your security is only as strong as your weakest vendor.

Related Articles

- The Human-in-the-Loop: A Governance Framework for Aussie SMBs

- Building a Cybersecurity-First Culture in the Age of AI

- Data Sovereignty 101: Keeping Your AI Australian

- AI Tools for Australian SMBs: Practical Guide with Real Case Studies

- Microsoft Copilot vs Google Gemini: The Battle for the Australian Office