The Human-in-the-Loop: A Governance Framework for Aussie SMBs

Your employees are using AI right now. The question isn’t whether—it’s whether you know about it, control it, or are exposed to risks you haven’t considered.

“Shadow AI” is the term for unauthorised AI tool usage in organisations. It’s the receptionist pasting customer complaints into ChatGPT. It’s the sales rep using an AI email writer with your CRM data. It’s the accountant uploading financials to an AI analysis tool.

Each instance is potentially a privacy breach, a security hole, or a compliance violation. And it is likely happening in most Australian businesses.

At CloudGeeks, we’ve helped businesses transform AI chaos into AI governance. Here’s how to create a “Human-in-the-Loop” framework that harnesses AI benefits while managing risks.

The Shadow AI Problem

What’s Actually Happening

Research from 2024 indicates that over 60% of employees have used generative AI for work purposes. Most did so without employer knowledge or approval.

Common Shadow AI Activities:

- Writing or editing emails and documents

- Summarising meeting notes

- Analysing customer feedback

- Generating marketing content

- Coding and technical work

- Creating presentations

- Financial analysis and modelling

Why It’s Dangerous

Privacy Breaches: Personal customer data pasted into external AI tools may breach obligations under the Privacy Act 1988 and the Australian Privacy Principles.

Data Leakage: Sensitive business information sent to public AI tools may be retained by the provider and, depending on its terms and settings, used as training data.

Accuracy Risks: AI outputs used without verification can propagate errors into business decisions.

Legal Liability: AI-generated content may infringe copyright or contain defamatory statements.

Contract Violations: Entering client information into AI tools may breach confidentiality clauses in client agreements.

Regulatory Exposure: Certain industries have specific requirements around AI usage.

Why Employees Do It

Don’t assume malice. Employees use Shadow AI because:

- It genuinely makes them more productive

- They don’t know it’s problematic

- No approved alternatives exist

- They face pressure to deliver more, faster

- They’ve seen others do it without consequences

Understanding motivation helps design policies that work.

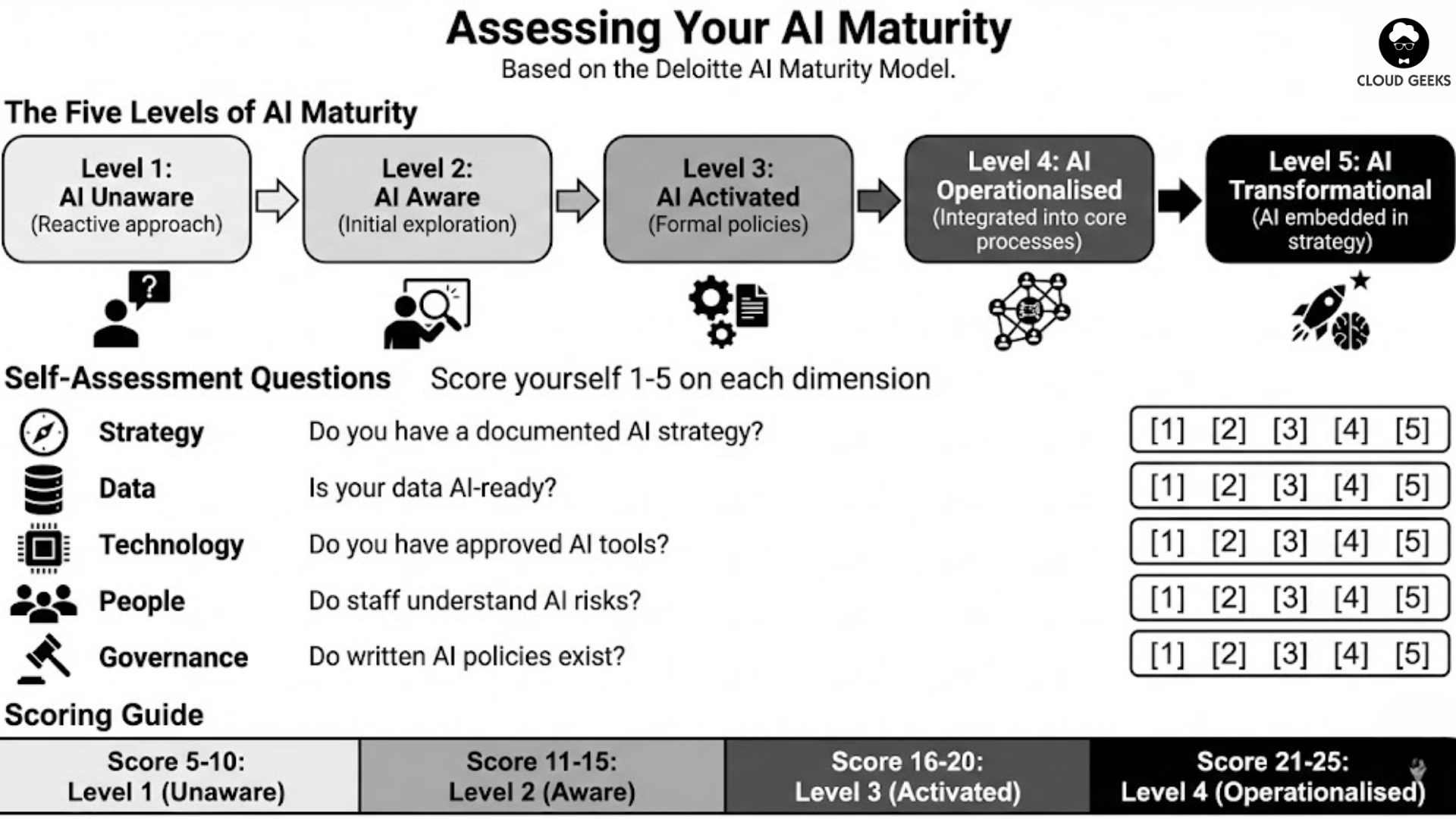

Assessing Your AI Maturity

Before creating governance, understand where you stand. A five-level AI maturity model, of the kind published by consultancies such as Deloitte, provides a useful framework.

The Five Levels

Level 1: AI Unaware

- No formal AI strategy

- Shadow AI likely prevalent

- No policies or guidelines

- Reactive approach to AI risks

Level 2: AI Aware

- Leadership recognises AI importance

- Initial exploration of AI tools

- Basic awareness of risks

- Beginning policy development

Level 3: AI Activated

- Specific AI use cases implemented

- Formal AI policies in place

- Designated AI responsibility

- Measured outcomes

Level 4: AI Operationalised

- AI integrated into core processes

- Comprehensive governance framework

- Regular monitoring and adjustment

- Clear ROI demonstrated

Level 5: AI Transformational

- AI embedded in strategy

- Continuous innovation with AI

- Industry-leading practices

- AI as competitive advantage

Self-Assessment Questions

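Score each of the five dimensions below from 1 (not in place) to 5 (fully in place), using its three questions as a guide, then add the five scores for a total between 5 and 25: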

Strategy

- Do you have a documented AI strategy?

- Is AI discussed at leadership level?

- Are AI investments prioritised?

Data

- Is your data AI-ready (clean, accessible)?

- Do you know where sensitive data is?

- Can you audit data usage?

Technology

- Have you evaluated AI platforms?

- Do you have approved AI tools?

- Can you enforce technology policies?

People

- Do staff understand AI capabilities and risks?

- Is there designated AI responsibility?

- Can employees get AI guidance?

Governance

- Do written AI policies exist?

- Are there consequences for violations?

- Is AI usage monitored?

- Score 5-10: Level 1 (Unaware)

- Score 11-15: Level 2 (Aware)

- Score 16-20: Level 3 (Activated)

- Score 21-25: Level 4 (Operationalised) or above

Most Australian SMBs sit at Level 1 or 2. Don’t be discouraged: awareness is the first step.
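The scoring is simple arithmetic, but writing it down removes arguments about band edges. Below is a minimal Python sketch that assumes you record one whole-number score from 1 to 5 per dimension; the function name and dictionary keys are illustrative, not part of any standard tool. The bands as written stop at Level 4, so the sketch does too.

```python
# Hypothetical helper: turns the five self-assessment scores into a maturity
# level using the score bands given in this article.
DIMENSIONS = ("strategy", "data", "technology", "people", "governance")

# (lowest total, highest total, label) taken from the article's bands
BANDS = [
    (5, 10, "Level 1 (Unaware)"),
    (11, 15, "Level 2 (Aware)"),
    (16, 20, "Level 3 (Activated)"),
    (21, 25, "Level 4 (Operationalised)"),
]


def maturity_level(scores: dict[str, int]) -> tuple[int, str]:
    """Validate one 1-5 score per dimension, sum them, and map the total to a band."""
    for name in DIMENSIONS:
        if name not in scores:
            raise ValueError(f"missing score for {name}")
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} score must be between 1 and 5")
    total = sum(scores[name] for name in DIMENSIONS)
    for low, high, label in BANDS:
        if low <= total <= high:
            return total, label
    # Not reachable: five valid scores always total between 5 and 25
    raise RuntimeError(f"total {total} outside 5-25")


print(maturity_level({"strategy": 2, "data": 3, "technology": 2, "people": 2, "governance": 1}))
# (10, 'Level 1 (Unaware)')
```

Re-running the same calculation each year gives you a simple, comparable record of progress.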

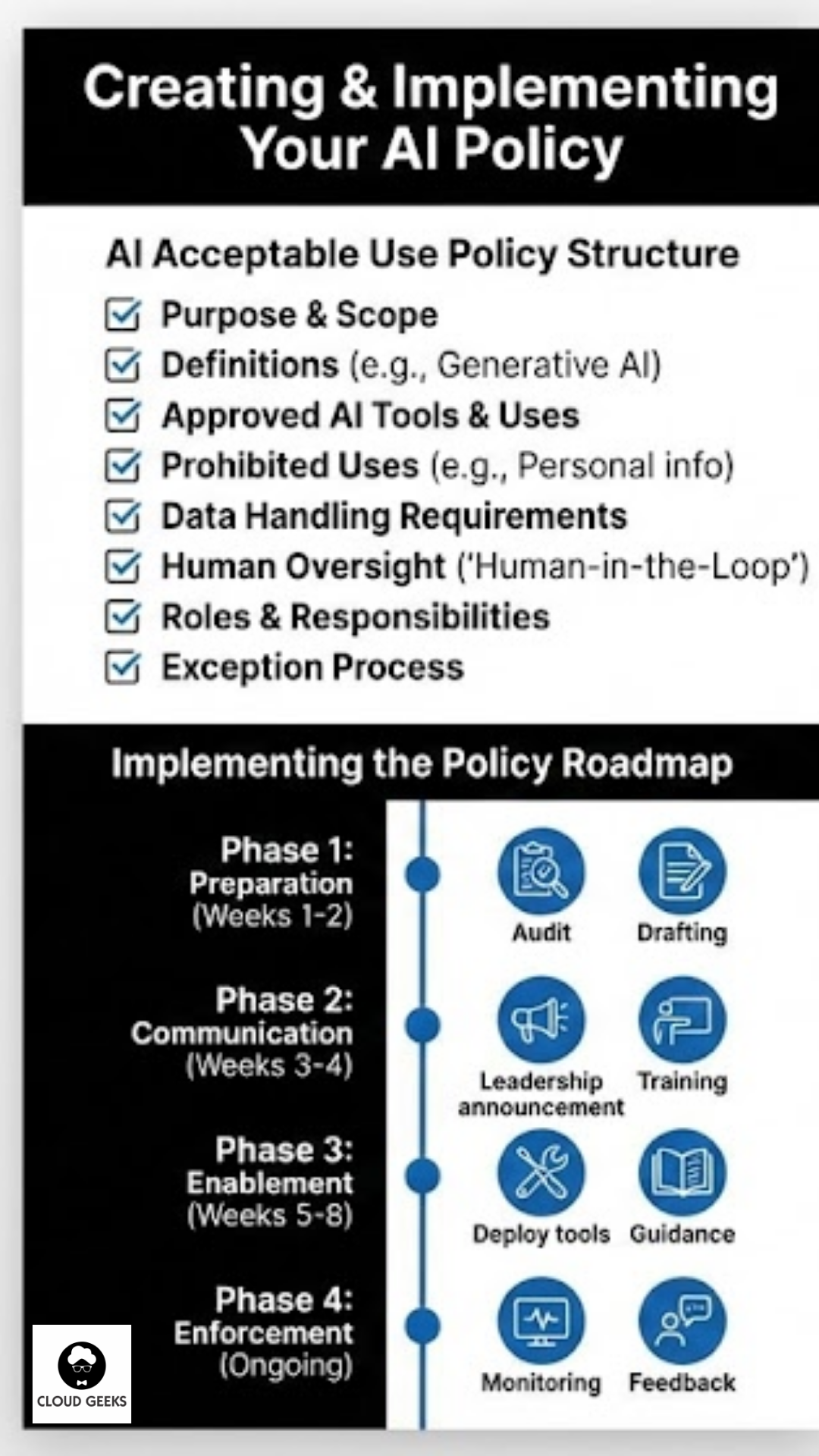

Creating Your AI Acceptable Use Policy

Policy Structure

A practical AI Acceptable Use Policy includes:

- Purpose and Scope

- Definitions

- Approved AI Tools

- Prohibited Uses

- Data Handling Requirements

- Human Oversight Requirements

- Roles and Responsibilities

- Compliance and Consequences

- Exception Process

- Review and Updates

Section-by-Section Guide

1. Purpose and Scope

Sample Text:

This policy governs the use of Artificial Intelligence (AI) tools by all employees, contractors, and third parties acting on behalf of [Company Name]. It applies to all AI tools, including but not limited to generative AI (ChatGPT, Claude, Gemini), AI features in existing software, and specialised AI applications.

The purpose is to enable productive AI use while protecting the company, our customers, and our employees from AI-related risks.

2. Definitions

Include:

- Artificial Intelligence (AI)

- Generative AI

- Personal Information

- Sensitive Information

- Company Data

- Approved Tools

- Shadow AI

Example:

“Generative AI” means AI systems that create new content, including text, images, code, or other outputs, based on user prompts. This includes ChatGPT, Claude, Gemini, Copilot, and similar tools.

3. Approved AI Tools

List specifically approved tools and their permitted uses:

| Tool | Approved Uses | Restrictions |
|---|---|---|
| Microsoft Copilot | Document drafting, email assistance, Excel analysis | No external customer data without de-identification |
| Xero AI (JAX) | Financial queries, invoice management | Normal accounting data only, per Xero agreement |
| Canva Magic Studio | Marketing content, internal documents | No confidential information in prompts |
| [Your CRM] AI features | Lead scoring, email templates | Within existing CRM data governance |

Key Principle: If it’s not on the approved list, it’s not approved.
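The “not on the list, not approved” rule is a default-deny allowlist. A minimal Python sketch, assuming the approved register is kept as a simple lookup keyed by lower-case tool name; the entries are copied from the example table above, with the [Your CRM] placeholder left out, and the second tool name in the loop is hypothetical.

```python
# Hypothetical approved-tools register, copied from the example table.
# Keys are lower-case tool names; values are the approved uses.
APPROVED_TOOLS = {
    "microsoft copilot": "Document drafting, email assistance, Excel analysis",
    "xero ai (jax)": "Financial queries, invoice management",
    "canva magic studio": "Marketing content, internal documents",
}


def is_approved(tool_name: str) -> bool:
    """Default-deny: only tools present on the register are approved."""
    return tool_name.strip().lower() in APPROVED_TOOLS


for tool in ["Microsoft Copilot", "Free PDF Summariser"]:
    verdict = "approved" if is_approved(tool) else "not approved: ask the AI Coordinator"
    print(f"{tool}: {verdict}")
```

Anything unknown falls through to “not approved”, so a new tool has to be added to the register deliberately rather than allowed by omission.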

4. Prohibited Uses

Be specific about what’s forbidden:

Absolutely Prohibited:

- Entering personal customer information into non-approved AI tools

- Using AI for decisions that significantly affect individuals without human review

- Submitting confidential business information to public AI tools

- Using AI to generate misleading or deceptive content

- Claiming AI-generated work as original human creation where disclosure is required

- Using AI in ways that violate other company policies

Prohibited Unless Specifically Authorised:

- AI for customer-facing communications without human review

- AI for financial reporting or compliance documents

- AI for legal documents or advice

- AI for hiring or performance decisions

5. Data Handling Requirements

Categories:

Category A: Never input to any AI tool

- Customer health or medical information

- Complete customer databases

- Financial account details

- Employee personal files

- Passwords or access credentials

Category B: Only approved AI tools whose restrictions permit this data

- Customer contact information

- Transaction history

- Business financial data

- Internal communications

Category C: Any approved AI tool

- Publicly available information

- De-identified data

- Generic business content

- Non-sensitive internal information
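The three categories translate into a small decision rule. This Python sketch assumes Category B means approved tools whose restrictions permit that kind of data (for example, under a vendor data agreement), which is what separates it from Category C. The tool names and the `cleared_for_b` flag are hypothetical.

```python
from enum import Enum


class DataCategory(Enum):
    A = "never input to any AI tool"
    B = "approved tools cleared for this data only"
    C = "any approved AI tool"


# Hypothetical register: approved tools and whether each is cleared for Category B data
APPROVED_TOOLS = {
    "enterprise-assistant": {"cleared_for_b": True},
    "design-tool": {"cleared_for_b": False},
}


def may_input(category: DataCategory, tool_name: str) -> bool:
    """Return True if data in `category` may be entered into `tool_name`."""
    tool = APPROVED_TOOLS.get(tool_name)
    if tool is None:
        return False  # not on the approved list: no data at all
    if category is DataCategory.A:
        return False  # Category A never goes into any AI tool
    if category is DataCategory.B:
        return tool["cleared_for_b"]
    return True  # Category C: any approved tool


print(may_input(DataCategory.B, "design-tool"))  # False
```

Checking the unapproved-tool case first means no category, however harmless, can be used to justify a tool that isn’t on the list.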

6. Human Oversight Requirements

The “Human-in-the-Loop” Principle:

All AI outputs intended for external communication or significant business decisions must be reviewed by a qualified human before use.

Specific Requirements:

- Customer communications: Review by employee familiar with the customer relationship

- Financial analysis: Review by someone qualified to assess accuracy

- Legal content: Review by qualified professional before reliance

- Technical code: Testing and review before deployment

- Public content: Brand and accuracy review before publication
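The oversight rule works like a release gate: external or significant outputs cannot go out until a named person has reviewed them. A minimal Python sketch, where the output-type keys are hypothetical labels for the five requirements above:

```python
from typing import Optional

# Hypothetical output-type labels mapped to the reviewer each requirement names
REQUIRED_REVIEWER = {
    "customer_communication": "employee familiar with the customer relationship",
    "financial_analysis": "someone qualified to assess accuracy",
    "legal_content": "qualified professional",
    "technical_code": "testing and code review before deployment",
    "public_content": "brand and accuracy reviewer",
}


def reviewer_for(output_type: str) -> str:
    """Who must review this kind of output; unknown types default to a qualified human."""
    return REQUIRED_REVIEWER.get(output_type, "a qualified human reviewer")


def can_release(external_or_significant: bool, reviewed_by: Optional[str]) -> bool:
    """Human-in-the-loop gate: external or significant outputs need a named reviewer."""
    if not external_or_significant:
        return True  # internal, low-stakes drafts fall outside the review rule
    return bool(reviewed_by and reviewed_by.strip())


print(reviewer_for("legal_content"), can_release(True, None))
# qualified professional False
```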

Documentation:

- For significant decisions, document that human review occurred

- Maintain audit trail of AI assistance and human oversight
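An audit trail can be as light as one line per AI-assisted task. The sketch below assumes an append-only JSON Lines file; the `AIUsageRecord` fields, the file name and the `enterprise-assistant` tool are illustrative, and for many SMBs a shared spreadsheet with the same columns would serve just as well.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AIUsageRecord:
    """One audit-trail entry; field names are illustrative, not a standard schema."""
    user: str
    tool: str
    purpose: str
    data_category: str  # "B" or "C" under the data handling rules (A is never entered)
    reviewed_by: Optional[str] = None
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def append_record(path: str, record: AIUsageRecord) -> None:
    """Append one JSON object per line; existing entries are never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")


def unreviewed(path: str) -> list[dict]:
    """Entries with no recorded human reviewer, for follow-up."""
    with open(path, encoding="utf-8") as f:
        entries = [json.loads(line) for line in f if line.strip()]
    return [e for e in entries if not e.get("reviewed_by")]


log = os.path.join(tempfile.mkdtemp(), "ai_usage.jsonl")
append_record(log, AIUsageRecord("sam", "enterprise-assistant", "Client quote email", "B", reviewed_by="Jo"))
append_record(log, AIUsageRecord("sam", "enterprise-assistant", "Board summary", "B"))
print([e["purpose"] for e in unreviewed(log)])  # ['Board summary']
```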

7. Roles and Responsibilities

All Employees:

- Comply with this policy

- Report suspected violations

- Complete AI awareness training

- Ask if uncertain about AI use

Managers:

- Ensure team compliance

- Model appropriate AI use

- Address violations promptly

- Support training participation

AI Coordinator/Champion (designate someone):

- Maintain approved tools list

- Evaluate new AI tools

- Answer policy questions

- Monitor for Shadow AI

- Recommend policy updates

Leadership:

- Set AI strategy

- Resource AI governance

- Review policy annually

- Address significant incidents

8. Compliance and Consequences

Monitoring: The company may monitor AI tool usage through [network monitoring/software audits/regular reviews] to ensure policy compliance.

Violations: Policy violations will be addressed through the standard disciplinary process, with consequences ranging from additional training to termination depending on severity and intent.

Reporting: Employees who identify potential policy violations should report to [AI Coordinator/Manager/HR] without fear of retaliation.
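For network monitoring, one low-cost approach is to scan an export from your firewall or DNS filter for visits to public AI sites. This Python sketch assumes a CSV export with `user` and `domain` columns (real formats vary by product) and uses an illustrative watch-list of domains and a hypothetical approved domain. Treat hits as prompts for a conversation, in line with an education-first approach, rather than proof of a breach.

```python
import csv
import io
from collections import Counter

# Illustrative watch-list of public generative AI sites; extend for your environment
AI_DOMAINS = {"chatgpt.com", "chat.openai.com", "claude.ai", "gemini.google.com"}
# Hypothetical: domains belonging to tools on your approved list
APPROVED_DOMAINS = {"copilot.microsoft.com"}


def shadow_ai_hits(log_csv: str) -> Counter:
    """Count visits to watched, unapproved AI domains per (user, domain).

    Matches exact domain names only; subdomains would need extra handling.
    """
    hits = Counter()
    for row in csv.DictReader(io.StringIO(log_csv)):
        domain = row["domain"].strip().lower()
        if domain in AI_DOMAINS and domain not in APPROVED_DOMAINS:
            hits[(row["user"], domain)] += 1
    return hits


sample = """user,domain
alex,chatgpt.com
alex,chatgpt.com
jo,copilot.microsoft.com
sam,claude.ai
"""
for (user, domain), count in shadow_ai_hits(sample).items():
    print(user, domain, count)
```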

9. Exception Process

When Exceptions May Be Granted:

- Pilot testing of new AI tools

- Specific project requirements

- Time-limited experiments

Process:

- Submit exception request to AI Coordinator

- Document proposed use, data involved, and risk mitigation

- Receive written approval before proceeding

- Report outcomes at conclusion
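Because exceptions are time-limited and need both written approval and an outcomes report, it helps to record each one with an expiry date. A minimal Python sketch; the `ExceptionRequest` fields mirror the process steps above, and the names, tool and dates in the example are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ExceptionRequest:
    """Hypothetical record of a policy exception request."""
    requester: str
    tool: str
    proposed_use: str
    data_involved: str
    risk_mitigation: str
    approved_by: Optional[str] = None   # written approval must exist before use
    expires: Optional[date] = None      # exceptions are time-limited
    outcome_report: Optional[str] = None

    def is_active(self, today: date) -> bool:
        """Usable only with written approval and on or before the expiry date."""
        return self.approved_by is not None and self.expires is not None and today <= self.expires

    def needs_outcome_report(self, today: date) -> bool:
        """After expiry, the requester still owes an outcomes report."""
        return self.expires is not None and today > self.expires and self.outcome_report is None


pilot = ExceptionRequest(
    "mia", "meeting-notes-pilot", "Summarise internal meetings",
    "Category C only", "No customer names in recordings",
    approved_by="AI Coordinator", expires=date(2025, 3, 31),
)
print(pilot.is_active(date(2025, 3, 1)))             # True
print(pilot.is_active(date(2025, 4, 1)))             # False
print(pilot.needs_outcome_report(date(2025, 4, 1)))  # True
```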

10. Review and Updates

This policy will be reviewed at least annually, or sooner if significant AI developments warrant. Employees will be notified of material changes.

Implementing the Policy

Phase 1: Preparation (Weeks 1-2)

- Audit current AI usage: Survey employees (anonymously if needed) about what AI tools they use

- Assess risks: Identify highest-risk current activities

- Select approved tools: Choose tools that meet security and compliance requirements

- Draft policy: Use framework above, customise for your business

Phase 2: Communication (Weeks 3-4)

- Leadership announcement: CEO/owner communicates AI governance importance

- Policy distribution: Share policy with all employees

- Q&A session: Address questions and concerns

- Training: Conduct initial AI awareness training

Phase 3: Enablement (Weeks 5-8)

- Deploy approved tools: Make sanctioned AI accessible

- Provide guidance: Create quick-reference materials for common tasks

- Establish support: Make AI Coordinator available for questions

- Monitor adoption: Track uptake of approved tools vs. Shadow AI

Phase 4: Enforcement (Ongoing)

- Regular monitoring: Check for policy compliance

- Address violations: Handle consistently, focusing on education

- Gather feedback: Learn what’s working and what’s not

- Iterate: Update policy and approved tools based on experience

Common Implementation Challenges

Challenge: “But I’m More Productive with ChatGPT”

Response: Validate the productivity benefit while explaining the risk. Offer an approved alternative. If no approved alternative exists, evaluate adding one.

Key Point: Your goal is safe AI use, not no AI use.

Challenge: “This Is Too Bureaucratic”

Response: Start with a minimal viable policy. Complex governance can grow over time. A simple policy followed is better than a comprehensive policy ignored.

Challenge: “We Don’t Have Resources for AI Governance”

Response: Governance doesn’t require a team. Designate existing staff with partial responsibility. Use templates (like this article) to accelerate policy creation.

Challenge: “Leadership Doesn’t Understand AI Risks”

Response: Present specific scenarios relevant to your business. Focus on regulatory, reputational, and financial risks leadership already cares about. Connect AI governance to existing risk management.

Challenge: “Employees Will Just Ignore This”

Response: Combine policy with enablement. Provide good alternatives to Shadow AI. Create positive incentives for appropriate use. Make compliance easy.

Measuring Success

Leading Indicators

- Percentage of employees completing AI training

- Adoption rate of approved AI tools

- Policy acknowledgement rate

- Questions/requests to AI Coordinator

Lagging Indicators

- Shadow AI incidents detected

- Policy violations requiring action

- Security incidents involving AI

- Productivity measures in AI-enabled functions
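The leading indicators are simple ratios against headcount. A minimal Python sketch, assuming you can count the staff who completed training, acknowledged the policy, and actively used an approved tool in the period; the counts in the example are made up for illustration.

```python
def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal place; 0.0 when headcount is zero."""
    return round(100 * part / whole, 1) if whole else 0.0


def leading_indicators(headcount: int, trained: int, acknowledged: int,
                       active_approved_users: int) -> dict[str, float]:
    """Leading indicators from this article, expressed as % of headcount."""
    return {
        "training_completion_pct": pct(trained, headcount),
        "policy_acknowledgement_pct": pct(acknowledged, headcount),
        "approved_tool_adoption_pct": pct(active_approved_users, headcount),
    }


print(leading_indicators(headcount=24, trained=18, acknowledged=22, active_approved_users=15))
# {'training_completion_pct': 75.0, 'policy_acknowledgement_pct': 91.7, 'approved_tool_adoption_pct': 62.5}
```

Tracking the same ratios each quarter matters more than any single reading; a rising adoption rate alongside falling Shadow AI incidents is the pattern to look for.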

Annual Review Questions

- Has the approved tools list kept pace with needs?

- Are employees finding the policy practical?

- Have we had incidents that reveal policy gaps?

- Are we realising productivity benefits from AI?

- Is our maturity level improving?

Resources

Policy Template

Download our AI Acceptable Use Policy template at CloudGeeks Resources (link to your actual resource).

Training Materials

- [Company] AI Quick Start Guide

- Data Classification Reference

- AI Tool Comparison Chart

- Incident Reporting Form

External Resources

- OAIC: Australian Privacy Principles guidelines

- Department of Industry, Science and Resources: Australia’s AI Ethics Principles

- Deloitte: State of AI in the Enterprise reports

The Business Case for AI Governance

Investment in AI governance delivers:

Risk Reduction:

- Lower likelihood of privacy breaches

- Reduced regulatory exposure

- Decreased legal liability

Productivity Gains:

- Employees confident using approved tools

- Less time wasted on risky workarounds

- Clearer decision-making about AI use

Competitive Advantage:

- Faster, safer AI adoption than competitors without governance

- Ability to bid on governance-conscious contracts

- Foundation for advanced AI applications

Cultural Benefits:

- Innovation within boundaries

- Trust between employees and leadership

- Transparency about technology use

Conclusion

The “Human-in-the-Loop” isn’t about restricting AI—it’s about enabling it responsibly. Without governance, AI use happens anyway, just unsafely. With governance, you channel AI’s benefits while managing its risks.

Start simple:

- Assess your current AI maturity

- Create a basic acceptable use policy

- Designate someone to own AI governance

- Provide approved alternatives to Shadow AI

- Iterate based on experience

The goal is progress, not perfection. A Level 2 organisation with basic governance is far safer than a Level 1 organisation where anything goes.

Ready to implement AI governance in your organisation? Contact CloudGeeks for assistance developing policies, selecting approved tools, and training your team on responsible AI use. We’ve helped numerous Australian businesses move from AI chaos to AI governance.

Keep humans in the loop. That’s where the wisdom lives.

Related Articles

- Managing Vendor Risk: Is Your AI Supply Chain Secure?

- Building a Cybersecurity-First Culture in the Age of AI

- Data Sovereignty 101: Keeping Your AI Australian

- AI for Australian Healthcare Practices: Scheduling, Privacy, and Patient Care

- AI Tools for Australian SMBs: Practical Guide with Real Case Studies